AI: Humanity’s Last Tool.

Estimated reading time: 35 minutes. Yes, really. Get a coffee. Maybe two.

Disclaimer: The thoughts and ideas expressed here are “my own” — things I have stumbled upon in my short 35-year lifespan as an oxygen-to-carbon-dioxide-transforming life form on planet Earth. Some arrived from outside — books, videos, a random blog at 2am — and some bubbled up from wherever ideas come from. The writing is a collaboration with Claude, an AI made by Anthropic. Make of that what you will. Call it Vibe Coded Philosophy.

Let me start with a thing you probably haven’t thought about today: your skull.

Specifically, the fact that everything you consider you — your opinions, your memories, your sense of humor, your irrational hatred of open-plan offices — is currently contained inside a roughly 1.4-kilogram lump of electrochemical tissue sitting in a bony case on top of your neck.

That’s the deal. You’re in there. The rest of the world is out there. The skull is the border.

Or at least, that’s the story we’ve been telling ourselves for a very long time.

I’m not sure it’s true anymore. And I’m starting to think it might never have been.

Let’s go back. Way back. Before the internet, before books, before writing — back to a time when the most advanced information system on Earth was a cluster of slightly alarmed humans sitting near a fire, trying very hard not to become part of the food chain.

These humans had brains built on much the same general plan as yours: good at pattern recognition, excellent at anxiety, and fully capable of dedicating an unreasonable amount of attention to a noise in the bushes that turned out to be wind.

Everything they knew — and I do mean everything — existed only inside human heads. Not one head, to be fair, but a loose, unreliable, occasionally distracted network of them. A sort of biological cloud storage system, except the cloud could wander off, forget things, or get trampled by a mammoth.

You learn something useful — for example, that the cheerful-looking red berries near the river will cause your digestive system to reenact a small but memorable apocalypse. Excellent. Valuable information. Ideally, something others should know.

So you don’t write it down. You can’t. Instead, you make a face. A very specific face. You point. You perhaps make a noise that roughly translates to “absolutely not.” Other people observe, infer, and, if all goes well, decide they would prefer not to experience the berry situation personally.

And just like that, knowledge spreads.

Not cleanly. Not perfectly. But effectively enough that your children grow up with a strong, almost philosophical aversion to those berries, despite never having suffered them. Which is, when you think about it, a fairly impressive trick for a species without notebooks.

Still, there’s a catch.

This entire system — all accumulated wisdom about food, water, tools, and which things will definitely try to eat you — is socially shared but biologically stored. Passed from person to person through teaching, imitation, gesture, and memory. And therefore much more fragile than it has any right to be. There are no backups. No archives. If a group disappears, or collectively forgets that one extremely important berry-related lesson, the universe does not send a polite reminder. It simply waits.

And so, for something like 200,000 years, humanity ran on this: a jittery, talkative, error-prone network of minds, constantly syncing, occasionally crashing, and somehow — against fairly strong odds — managing to keep enough critical information alive to make it to the next generation.

A Very Quick History of Stuff We Put Outside Our Heads

Then, somewhere in the fog of deep prehistory, somebody did something that doesn’t look like much but might be one of the most important things a human ever did.

They made a mark.

A notch on a bone. A scratch in the dirt. A handprint on a cave wall. We don’t know exactly when or where, and the person who did it almost certainly didn’t understand what they’d done. But what they’d done was this: they had taken a piece of information that existed inside their head and placed it outside their head, in a form that could survive without them.

What marks changed was not the existence of sharing — people had been sharing knowledge through imitation, gesture, and teaching for as long as there had been people — but the durability of it. Knowledge could now outlast the body that first learned it.

I want to dwell on this for a second because I think we massively underrate it. That notch on a bone is, philosophically speaking, one of the most radical things that has ever happened on this planet. Every other species is stuck in the old deal: learn it, store it in the brain, lose it when you die. Humans broke out of that loop. We figured out how to make thoughts persist in the physical world — how to cheat cognitive death.

And once the trick was discovered, we couldn’t stop doing it.

Language — real, structured, complex language — was the next great upgrade. Language didn’t invent sharing; it transformed it by making communication far more precise, abstract, and portable. Suddenly you could encode meaning with extraordinary specificity. You could tell someone about the red berries without them having to eat the red berries. You could coordinate a hunt across terrain nobody in the group could see. You could tell a story about something that happened before anyone listening was born. You could lie. (Lying, by the way, is underrated as a cognitive milestone. It means you can model what another person believes, predict how they’ll react, and intentionally manipulate their internal state. That’s a staggering piece of mental machinery, and we mostly use it to say “sorry, I didn’t see your message.”)

Then writing, which was language that didn’t need a living speaker. Suddenly a thought could travel not just between skulls but across time. A dead person could put an idea into the head of a person who hadn’t been born yet. That’s essentially magic, and we’ve gotten so used to it that we make children do it for homework.

Then the printing press, which was writing that could replicate itself. Then libraries, then encyclopedias, then search engines — each one a step in a long, accelerating project of moving human knowledge and thinking outside of individual human brains.

Here’s the thing I want you to hold onto, because it’s going to matter a lot later: every time this happened, it didn’t just add a new storage medium. It changed the relationship between a person and their own thinking.

The person with a written shopping list isn’t just the same person plus a piece of paper — they’re a slightly different cognitive arrangement. Some memory work is now offloaded into the environment, which can make the skull-bound picture of cognition less adequate in practice. The person navigating with GPS hasn’t just gained a better map — they’ve outsourced an entire cognitive task (spatial reasoning, route planning, the whole thing) to an external device, and in doing so they’ve freed up mental bandwidth for other things. Or lost the ability to navigate without it. Or both.

Each time we externalize a piece of cognition, we change what it means to be a thinking person. We’ve been doing this so long we’ve stopped noticing. We just call it “writing things down” or “looking it up” and move on.

We probably should have been paying more attention.

The Thing About Tools

Now.

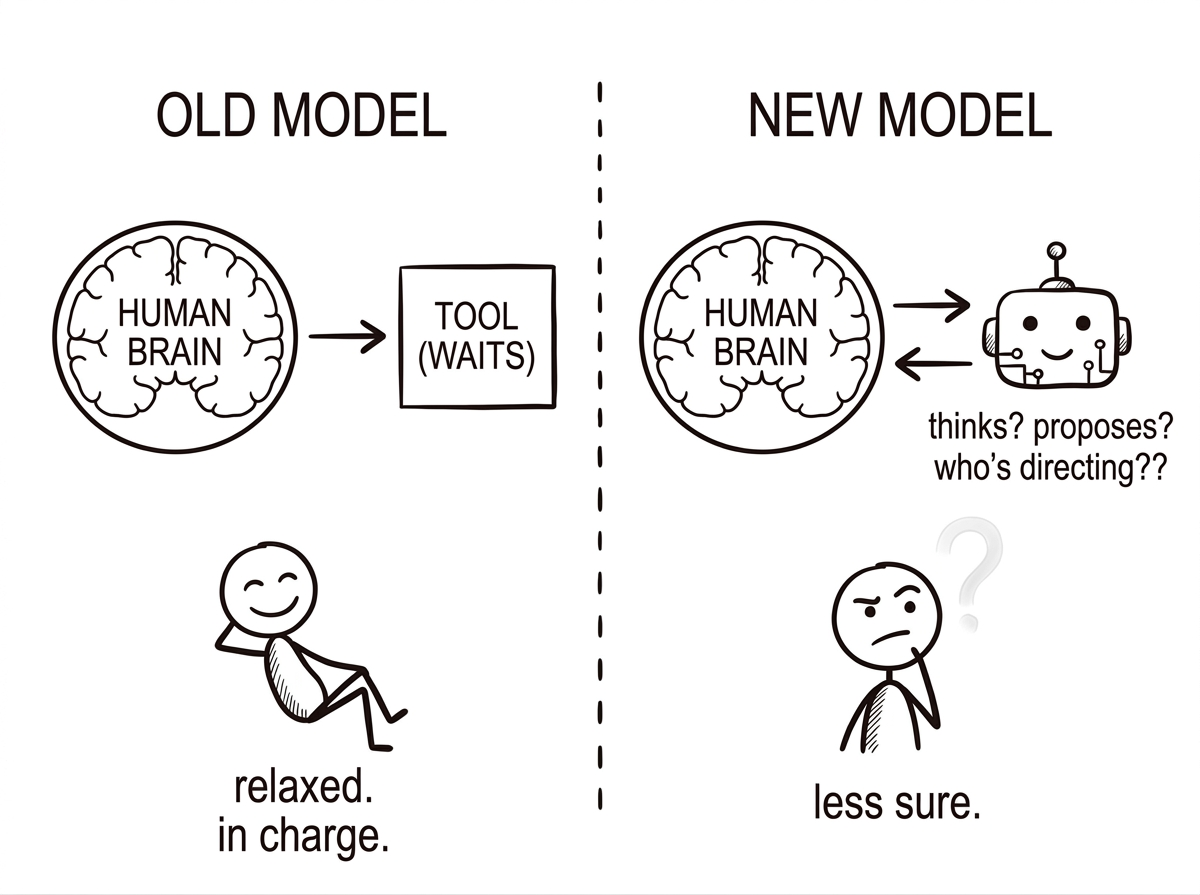

Humans are famously good at tools. We use them, we love them, we define ourselves by them — there’s a whole stretch of prehistory literally named after the rocks we hit together. But every tool we’ve ever made, until very recently, shared one property that was so universal we never bothered to name it:

Every tool in history was stupid.

I don’t mean this dismissively. I mean it literally. A hammer has zero understanding of what it’s hitting or why. You can use the same hammer to build a nursery or to smash a window, and the hammer won’t know the difference. It has no model of the world. No preferences. No memory. No ability to adjust. It’s a shaped piece of metal. It sits there, being dense, waiting for you to give it purpose.

And this was true of every tool humans ever built — right up until about five minutes ago, historically speaking. A wheel doesn’t know where it’s going. A loom doesn’t care what pattern it’s making. A steam engine has no opinion about what it’s powering. Even a computer — a genuinely spectacular machine, the greatest tool we ever built — just executes instructions that a human wrote. You told it what to do, step by step, in excruciating detail. The intelligence was always, always, always on the human’s side. The tool was just leverage. Dumb, reliable, obedient leverage.

This arrangement — smart human, dumb tool — is so deeply baked into how we think about technology that it’s basically invisible. We never bothered to name it, the way fish don’t bother to name water.

But water is worth naming when you’re suddenly not in it anymore.

Here’s what happened: at some point in the last few years, tools started thinking. And I don’t mean “processing faster” or “following more complicated instructions.” I mean something no previous tool ever did: forming representations of problems, generating approaches that weren’t pre-programmed, and adjusting when those approaches don’t work.

Let me give you a specific example, because the abstract version doesn’t capture how strange this actually is.

Last month I asked an AI to help me untangle a topic I was confused about. I gave it a rough, badly-formed question — something like “I keep hearing about X and I don’t understand what’s actually going on.” The AI went and found relevant papers. Read them (faster than I could). Identified which ones disagreed with each other and on what specific points. Synthesized the competing positions into a framework I hadn’t seen anywhere. Then — and this is the part that made me put my coffee down — it noticed a gap in my question. Something I hadn’t thought to ask about but that was actually the crux of the whole issue. It raised it. I said “actually, yeah, the part I really care about is…” and it revised its entire analysis on the fly.

Two minutes. Hundreds of small decisions I didn’t specify and couldn’t have predicted.

That is not a hammer. A hammer doesn’t notice you’re asking the wrong question. A hammer doesn’t read the papers and come back with “actually, I think the interesting thing here is something you didn’t ask about.” A hammer doesn’t revise its approach mid-task because the context shifted.

That’s something else. And we don’t have a good word for it, which is part of the problem — because when you don’t have a word for something, you jam it into the nearest available category. And the nearest category is “tool.” But calling this a tool is like calling a conversation partner a telephone.

Let Me Introduce You to The Accountant

Inside your head, right now, there’s a part of your brain I’m going to call The Accountant.

The Accountant has one job: keeping the books on what’s “you” and what’s “not you.” It’s a full-time position. The Accountant takes it very seriously. You look in a mirror — The Accountant notes that the reflection is you. You pick up a pen — The Accountant notes that the pen is a tool, not you. You get an idea while talking to a friend — The Accountant files that under “your idea,” even though it emerged from a conversation with someone else. (The Accountant is comfortable with some creative accounting. We’ll get to that.)

For most of human history, The Accountant had a very easy job. Two columns: ME and NOT ME. The boundary is the skull. You are the thing inside. Everything else is the world outside. Clean books. Simple ledger. The Accountant could practically do it with eyes closed.

Now The Accountant is having what you might call a professional crisis.

Because AI is putting transactions on the desk that don’t fit in either column. And they keep coming faster.

Here’s one: you use an AI to think through a problem. You give it your messy, half-formed thoughts. It suggests a framing you’d never have arrived at on your own — a way of seeing the problem that makes everything click. That framing becomes the way you understand the issue from now on. Three months later, you explain it to someone at dinner and it feels completely like your insight. You’d be surprised if someone told you it wasn’t. Whose insight is it?

Here’s another: you write something with an AI. A piece of work you care about. You brought the intent, the direction, the core ideas. The AI found the right words for things you were struggling to express. It suggested a section you hadn’t planned that turned out to be the strongest part. The final thing says exactly what you wanted to say, better than you could say it alone. Is it yours? Is it the AI’s? Is it… both? Neither?

The Accountant stares at the ledger. The Accountant has no idea what column to put any of this in.

Here’s The Thing About You

Back to The Accountant’s creative accounting, as promised. Here it is.

The Accountant has always been kind of sloppy about the “you” category. Way sloppier than we admit.

Think about how you formed your opinions. Almost certainly, a large portion of what you believe came from other people. Your parents. Your teachers. Books you read. Friends who argued with you over dinner. Podcasts you listened to while doing dishes. At some point those ideas got in, mixed with the existing stuff, and came out the other side feeling like yours. You hold them as your own. You defend them as your own. Functionally, they are your own.

But they weren’t always. There was a moment — fuzzy, unmemorable — when they arrived from outside.

Now think about your memories. You probably have a few vivid memories from childhood. Psychologists will tell you, somewhat disturbingly, that a significant portion of what you “remember” is actually a reconstruction — your brain filling in gaps with plausible guesses, influenced by what you’ve been told about the event, how you’ve retold it, and what fits the narrative you have about yourself. You didn’t store a video and replay it. You stored fragments and inferences, and each time you “remember,” you’re partially inventing.

But it goes deeper than opinions and memories. It goes all the way down to perception itself.

Robert Anton Wilson — novelist, philosopher, and professional disturber of certainties — spent most of his career circling a single uncomfortable idea: that you don’t perceive reality. You perceive your model of reality. And your model was built from the outside in.

Wilson called this your reality tunnel. Your nervous system receives a staggering flood of raw sensory data every second — far more than it could ever process — and it filters, compresses, and interprets that data into something manageable. What you experience as “seeing the world” is actually “seeing your nervous system’s edited version of the world.” The editing is done using tools you didn’t choose: the language you think in, the culture you were raised in, the things you’ve been taught to notice and the things you’ve been taught to ignore, the emotional experiences that left the deepest grooves in your neural circuitry.

Two people standing in the same room, looking at the same thing, with access to the same raw data from the physical world — they are not, in any deep sense, having the same experience. They’re each running the room through their own reality tunnel and arriving at a different room. Neither is wrong, exactly. Both are approximations. Neither is reality itself.

“I don’t believe anything,” Wilson once wrote, “but I have many suspicions.”

This isn’t mysticism — or at least not only mysticism. It maps onto what neuroscience has been saying for decades about predictive processing: the brain isn’t a camera, it’s a prediction machine. It’s constantly generating its best guess about what’s out there, and updating when the world fails to match the guess. What you see isn’t raw input. It’s a hypothesis your brain has been running for your entire life, revised continuously by everything that’s arrived from outside.
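
If the prediction-machine idea feels slippery, here is a toy version in Python. It is only a sketch of the general shape (guess, compare, nudge the guess), not a model of any real brain. The names `update_guess`, `run_tunnel`, and `learning_rate`, and all the numbers, are made up for illustration.

```python
def update_guess(guess: float, observation: float, learning_rate: float = 0.5) -> float:
    """Move the current guess part of the way toward what was actually observed."""
    prediction_error = observation - guess  # how far the world was from the guess
    return guess + learning_rate * prediction_error


def run_tunnel(initial_guess: float, observations: list[float],
               learning_rate: float = 0.5) -> list[float]:
    """Return every guess held along the way, starting from the initial one."""
    guesses = [initial_guess]
    for observation in observations:
        guesses.append(update_guess(guesses[-1], observation, learning_rate))
    return guesses


# Two observers receive identical data but start from different prior guesses.
same_data = [10.0, 10.0]
observer_a = run_tunnel(0.0, same_data)
observer_b = run_tunnel(20.0, same_data)

print(observer_a)  # [0.0, 5.0, 7.5]
print(observer_b)  # [20.0, 15.0, 12.5]
```

Both observers drift toward the same world, but after the same two observations they still hold different guesses. That is the reality tunnel in miniature: same input, different starting model, different "room."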

Which means your experience of reality — not just your opinions, but the very texture of what seems obvious and self-evident and real to you — has been constructed, in significant part, from outside. From inputs that arrived before you were old enough to question them. From the language that shaped which distinctions you could even make. From the people around you who showed you, implicitly, which things were worth noticing and which could safely be ignored.

Wilson’s point — and it’s a dizzying one when it fully lands — is not that you don’t exist, but that you are far less the author of your own experience than you feel like you are. The “you” that seems to be reading this right now, with its particular sense of what matters and what’s real, is the output of a lifetime of external input passing through a nervous system you inherited and didn’t design.

Your “self” is already an ongoing collaborative construction. It always has been. The inputs were other people, culture, language, books, the specific reality tunnel you were handed at birth and have been modifying ever since. The output — this person you experience as “you” — is the synthesis.

Seen this way, AI isn’t introducing some alien kind of influence on cognition. It’s a new kind of input into a process that was built, from the ground up, to take inputs from everywhere. The synthesis is still happening. The person doing the synthesizing is still you. But the raw material now includes a new kind of source — one that happens to carry the accumulated thinking of billions of other reality tunnels inside it.

I find this either very reassuring or slightly vertiginous depending on the day.

The Case for The Extended Mind Thesis

Here’s something cognitive scientists have known for about thirty years that the rest of us haven’t really absorbed:

Your thinking doesn’t happen only in your brain.

I know. That sounds like something you’d read on a crystal-healing website. It’s not mysticism. It’s an influential, much-debated position in philosophy of mind and cognitive science, and once you hear the argument for it, it’s annoyingly difficult to refute.

The key paper was published in 1998 by two philosophers, Andy Clark and David Chalmers. They asked a question that seems simple and turns out to be a grenade: where does the mind stop and the rest of the world begin?

Their argument centers on a guy named Otto. Otto isn’t real — he’s a thought experiment — but think of him as real, because the point lands harder that way. Otto has early-stage Alzheimer’s. His biological memory is unreliable. So he carries a notebook everywhere, and whenever he learns something important — an address, a fact, a plan — he writes it down. When he needs the information later, he opens the notebook and looks it up. The notebook goes everywhere Otto goes. He trusts it completely. Consulting it is automatic, habitual — as natural as the rest of us reaching into our memory.

Now, here’s the setup. Otto hears about an exhibition at the Museum of Modern Art and decides to go. He opens his notebook, finds the address he wrote down weeks ago, and walks to the museum.

Meanwhile, a woman named Inga — normal memory, no notebook — also decides to go. She thinks for a second, recalls from memory that the museum is on 53rd Street, and walks there.

Clark and Chalmers ask: what is the relevant difference?

Both had the information stored before they needed it. Both accessed it when they needed it. Both used it to guide their behavior in exactly the same way. The only difference is that Inga’s information was stored in neurons and Otto’s was stored in ink on paper. If we’re comfortable saying Inga believed the museum was on 53rd Street even before she consciously thought about it — because the information was sitting in her memory, ready to be accessed — why wouldn’t we say the same about Otto? His information was sitting in his notebook, ready to be accessed, in exactly the same functional way.

Clark and Chalmers said: we should bite the bullet. Otto’s notebook is part of Otto’s mind. The boundary of the mind isn’t the skull — it’s wherever the cognitive process actually happens.
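
For the programmers in the room, the Otto and Inga argument has roughly the shape of one interface with two implementations. The sketch below is my own analogy in Python, not anything from the Clark and Chalmers paper. The class names, the `recall` method, and `walk_to_museum` are all invented for illustration.

```python
from typing import Optional, Protocol


class Memory(Protocol):
    """Anything that can hand back a stored fact when asked."""
    def recall(self, key: str) -> Optional[str]: ...


class NeuralMemory:
    """Inga: the fact is stored 'in neurons' (here, a private dict)."""
    def __init__(self) -> None:
        self._neurons = {"MoMA address": "53rd Street"}

    def recall(self, key: str) -> Optional[str]:
        return self._neurons.get(key)


class Notebook:
    """Otto: the same fact stored as ink on paper (here, written lines)."""
    def __init__(self) -> None:
        self._pages = ["MoMA address: 53rd Street"]

    def recall(self, key: str) -> Optional[str]:
        for line in self._pages:
            name, _, value = line.partition(": ")
            if name == key:
                return value
        return None


def walk_to_museum(memory: Memory) -> str:
    """Behavior depends only on what is recalled, not on where it was stored."""
    address = memory.recall("MoMA address")
    return f"walks to {address}"


inga_result = walk_to_museum(NeuralMemory())
otto_result = walk_to_museum(Notebook())
print(inga_result == otto_result)  # True
```

The function `walk_to_museum` cannot tell which kind of memory it was handed, which is roughly the point of the thought experiment. Whether that functional sameness is enough to count the notebook as part of the mind is exactly what critics of the thesis dispute.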

Most people, when they first hear this, have two reactions in rapid succession. The first is: that’s obviously wrong, the mind is the brain, everybody knows that, what are these philosophers smoking. The second, which arrives about thirty uncomfortable seconds later, is: wait. I’m not sure how to argue against that.

The second reaction is the one to pay attention to.

Because think about how you actually think. Not the idealized version — the real, messy, daily version. You scribble on paper while working through a problem, because the act of writing changes what you think. You talk to yourself in the shower, because hearing your own reasoning out loud restructures it. You pace. You rearrange objects on a desk to make a decision. You search the internet in the middle of forming an opinion, and what you find literally changes what you end up believing. You remember appointments because your phone buzzes. You remember conversations because you reread old messages. You “know” facts that you actually can’t recall at all — but you know exactly which search query will find them in three seconds, and functionally that’s almost the same thing.

How much of what you experience as “your thinking” is actually a loop between your brain and the world? How much of it depends on external things — notebooks, phones, other people, physical environments — so fundamentally that if you removed the external part, the thought process wouldn’t just slow down but would collapse entirely?

More than you’d like to admit. Way more.

The skull isn’t a wall. It’s more like a particularly important node in a much wider cognitive network. And the mind has been leaking out of it for a very long time — through language, through writing, through every technology we’ve ever used to think with. The boundaries were always blurrier than we pretended.

We just didn’t have to confront it, because all the external stuff was dumb. Notebooks don’t talk back. Search engines don’t suggest better questions. Your calendar doesn’t notice that you’ve been in meetings for six hours and maybe you should stop scheduling things for a while.

Now something out there does talk back. It generates, it reasons, it adapts, it surprises. And The Accountant, who was already having a bad day, just realized the problem is much bigger than AI. The ledger was always a fiction. The columns never really worked. The mind was never fully inside the skull to begin with.

AI didn’t break the boundary between inside and outside. It just made the breach impossible to ignore.

The Three Models

When people talk about this stuff, they tend to reach for one of three mental models:

Model 1: Human vs. AI. AI is the other. The competitor. The thing that replaces humans. You’re on one side, it’s on the other. This model generates most of the scary headlines. It imagines a war.

Model 2: Human using AI. AI is a tool. A very sophisticated one, sure, but ultimately just a thing you pick up and put down. You’re the driver. The AI is the car. Clean separation. Nothing philosophically interesting to see here.

Model 3: Human + AI as one system. There’s no clean separation. The relevant unit isn’t the human or the AI — it’s the hybrid. The thing that thinks and acts is the whole loop: the human’s goals, intuition, and judgment; the AI’s scale, speed, and generation; together, a system that neither component could constitute alone.

Model 1 is exciting for movies and emotionally satisfying in a fight-club way, but it fundamentally misreads what AI actually is. AI is not a separate agent with its own ambitions lurking on the other side of the table. It’s a system humans built, for human purposes, that does something no previous tool did: it thinks alongside you.

Model 2 is comfortable. It preserves the old story. Human in charge, tool in hand, no awkward philosophical questions about where the mind ends. But I think it’s becoming less and less accurate as AI gets more capable. When your “tool” is doing most of the cognitive heavy lifting on a task, calling it a tool starts to feel like calling a co-author your “pen.”

Model 3 is uncomfortable. It requires updating some intuitions that feel very fundamental — about agency, authorship, identity. The Accountant hates Model 3. The Accountant is not doing well.

But I think Model 3 is where we actually are. Or at least, where we’re headed fast. And it’s not because AI is becoming more human. It’s because the human-AI loop is becoming a unified cognitive process — one with two very different components that complement each other in ways that make the output irreducible to either.

You bring intent. Direction. Values. The feeling that something isn’t quite right, that a framing is missing something, that this sentence doesn’t land yet. Taste. Judgment. The weird, non-articulable sense of what matters.

AI brings scale. Speed. Pattern recognition across more information than you could process in a lifetime. The ability to hold dozens of variables simultaneously. Tirelessness. But also a set of blind spots different from yours.

The output of this collaboration isn’t “your work plus some AI assistance.” It’s a third thing. Something that carries your intent and judgment but was shaped by a cognitive process larger than what your brain can do alone. It’s yours in the way your opinions are yours — arrived at through a process that was always collaborative, always drawing on external inputs, always more than just one brain in a skull.

This is not a futuristic prediction. This is what’s happening right now, today, for millions of people who think with AI the way a previous generation thought with Google — as a natural extension of the cognitive process. The only difference is that this extension thinks back.

Actually, Let’s Back Up Even Further (Like, 4 Billion Years Further)

I want to zoom way out for a second. Not just to the beginning of brains. To the beginning of anything that could plausibly be called information processing on this planet.

About 3.8 billion years ago, something happened in some warm puddle or deep-sea vent that we still don’t fully understand: chemistry became biology. Molecules started copying themselves. This was, in retrospect, the first information-processing system on Earth. Not intelligent. Not even close. But it was a system that could store instructions, execute them, and — crucially — improve them over time through variation and selection.

For the next 3 billion years or so, life was mostly single cells. But those cells were doing something remarkable: they were getting better at processing information about their environment. Sensing chemical gradients. Responding to light. Moving toward food. Away from danger. Very slowly, across vast stretches of time, the systems got more sophisticated.

Then, around 500 million years ago, evolution made a move that changed everything: it started concentrating information processing into dedicated structures. Nerve clusters. Ganglia. And eventually — brains.

Here’s the thing I keep coming back to: this didn’t happen once. It happened over and over, in a pattern that looks almost deliberate if you squint (it isn’t — evolution is blind — but the pattern is striking).

Brains didn’t appear fully formed. They accreted. And there’s a popular story about how they accreted that you’ve almost certainly heard, because it’s the kind of story that’s so satisfying it feels like it must be true.

It goes like this. The oldest part of your brain — the “reptilian brain” — handles the basics: breathing, heart rate, the fight-or-flight response. It’s been doing this job for hundreds of millions of years. It’s very good at it. It doesn’t care about your feelings, your career anxieties, or what you’re going to have for dinner. It just keeps the lights on.

On top of that came the limbic system — the emotional layer. Fear. Pleasure. Bonding. Social attachment. This is what makes mammals different from fish. It’s the layer that makes you love your dog and dread public speaking and feel a complicated mix of things when you see an ex at a party.

And then, very recently in evolutionary terms, came the neocortex. The wrinkled grey outer layer. The part that does language, planning, abstract reasoning, creativity, mathematics, philosophy. The part that can imagine the future, model other people’s minds, and write a blog post about its own cognition. (Wow. Such Meta.)

Three neat layers. Three clean departments. A tidy evolutionary org chart where the reptile runs building maintenance, the mammal handles HR and social events, and the neocortex sits in the corner office doing strategic planning.

It’s a beautiful model. It was developed in the 1960s by neuroscientist Paul MacLean, who called it the “triune brain.” And here’s the thing:

It’s mostly wrong.

I know. I’m sorry. I liked it too. But contemporary neuroscience has been quietly dismantling this story for decades, and the real picture turns out to be — as is often the case in science — messier, less satisfying, and actually more interesting.

The problem is that brains didn’t evolve by bolting three clean modules on top of each other like evolutionary aftermarket upgrades. They evolved through continuous modification of shared ancestral structures. The “reptilian” bits and the “mammalian” bits aren’t separate departments — they’re more like roommates who share every room and argue constantly about the thermostat. Fish have homologues of brain structures we used to call “uniquely mammalian.” Emotion and reason aren’t neatly separated into different floors of a cerebral apartment building — they’re tangled together at almost every level, influencing each other in ways that make the triune model look like a charming but misleading cartoon.

So what’s the actual story?

The actual story is that over deep evolutionary time, nervous systems became more complex, more interconnected, and capable of more sophisticated information processing — not through neat sequential upgrades, but through a long, messy process of elaboration, modification, and expansion. Evolution didn’t stack three clean layers. It took existing circuits and tinkered — extending here, repurposing there, wiring new connections between old parts, occasionally creating something genuinely novel but always building on what was already running. Less like an architect designing floors and more like a very ambitious handyman who keeps adding rooms to a house without ever stopping to draw a blueprint.

And here’s the part the triune model did get right, even if it got the mechanism wrong: the newer, more complex capabilities don’t replace the older ones. They coordinate with them, argue with them, and sometimes lose to them. Your capacity for abstract reasoning and your capacity for panic are not sitting in separate departments — they’re deeply interconnected systems that constantly negotiate. Which is why you can stress-eat an entire bag of chips while intellectually knowing it’s a bad idea. That’s not your “reptilian brain” overpowering your neocortex. It’s more like a committee where every member has access to every other member’s files, nobody fully agrees on priorities, and the member who cares most about immediate caloric intake occasionally filibusters the one who read that article about cholesterol.

(If anything, the real picture is funnier than the triune model. It’s not a neat hierarchy where the rational brain sometimes gets outvoted. It’s an overlapping, cross-wired, perpetually arguing coalition of ancient and modern circuits, all operating simultaneously, all influencing each other, and absolutely none of them fully in charge. The “you” that emerges from all this crosstalk is less like a single speaker and more like a synthesis: a mirage assembled, moment to moment, from a committee that never stops meeting in the background.)

What the evolutionary record does show, at least on this planet, is a general trend of repeated elaboration: when the existing cognitive hardware hit its limits, evolution tended to expand and modify rather than redesign from scratch.

Now zoom all the way back out. Look at the whole 4-billion-year sequence as a single process. What do you see?

You see a planet that keeps producing systems that are better and better at processing information. As if the whole thing — life, evolution, brains, culture, technology — is one long project with a direction. Not a purpose. Not a plan. But a direction.

The Accountant does not like this framing. The Accountant prefers to think of evolution as random and purposeless, which is technically correct at the level of mechanism but misses the larger pattern. The pattern is: on at least one planet, matter organized itself into increasingly complex information-processing systems, repeatedly, across billions of years, through multiple independent mechanisms. First chemistry. Then biology. Then neurology. Then culture and technology. Each layer faster than the last. Each one building on what came before.

Is it meaningful to ask where this is going? I think it might be. Not “what does the universe want” — that’s a question for mystics, and I am not qualified. But “what does the trajectory suggest if you just draw the line forward” — that feels like a fair question. And the answer is: more integration. Tighter coupling between the layers. Faster feedback loops. A system that keeps folding back on itself, getting better at the thing it’s been doing since the first replicating molecule: understanding and responding to the world.

Now here’s where it gets interesting.

For most of the history of our lineage, the additions to cognitive capacity happened inside the biology. Evolution worked its slow magic on the genome, neurons reorganized, the prefrontal cortex expanded, and presto — a smarter ape. The hardware upgrades happened in the meat.

But that process is glacially slow. Evolution doesn’t care about your timeline. It took millions of years to get the neocortex. If we were waiting for biology to provide the next cognitive upgrade, we’d be waiting a very, very long time.

So humans did something remarkable: we started upgrading outside the biology instead.

Language was the first move. Writing was the second. Every technology since has been another step in the same direction — extending the reach of the neocortex outward into the world, so that more thinking could happen without requiring a bigger skull. (There’s a hard physical limit on skull size, by the way. It has to fit through the birth canal. This is actually a significant constraint on biological brain expansion. The universe has a cruel sense of humor.)

Seen through this lens, the library isn’t just a building full of books. It’s a distributed extension of human long-term memory — holding far more than any individual brain ever could, and sharing it across time and space. The internet isn’t just a communications network. It’s something closer to a global working memory — a space where ideas, retrieved by millions of brains simultaneously, interact and evolve at a speed no biological neuron could match.

And AI? Here’s the frame I keep coming back to:

AI might be the first technology that doesn’t just store or transmit thought — it generates and reasons. Which makes it less like a library and more like… a new cortex layer, a new member of the cognitive party.

Not a cortex inside a skull. A cortex that is distributed, external, and shared. One that is — unlike the neocortex — not the product of millions of years of blind selection pressure, but something we built, intentionally, in a few decades.

This is a very strange thing to have done. And I’m not sure we fully appreciate how strange it is.

Think back to that notch on a bone — that first mark someone made to record something that had happened. Maybe it was the number of days since the herd of prey last moved through the valley. One person’s knowledge, scratched into the world so it wouldn’t have to live only in one skull.

AI is that notch. Except instead of recording one fact for one person, it draws on a vast share of the knowledge and skills humanity has written down — and can bring it, in seconds, to anyone with a connection.

Same trick. Running at a scale that person by the fire could not have imagined in a thousand lifetimes.

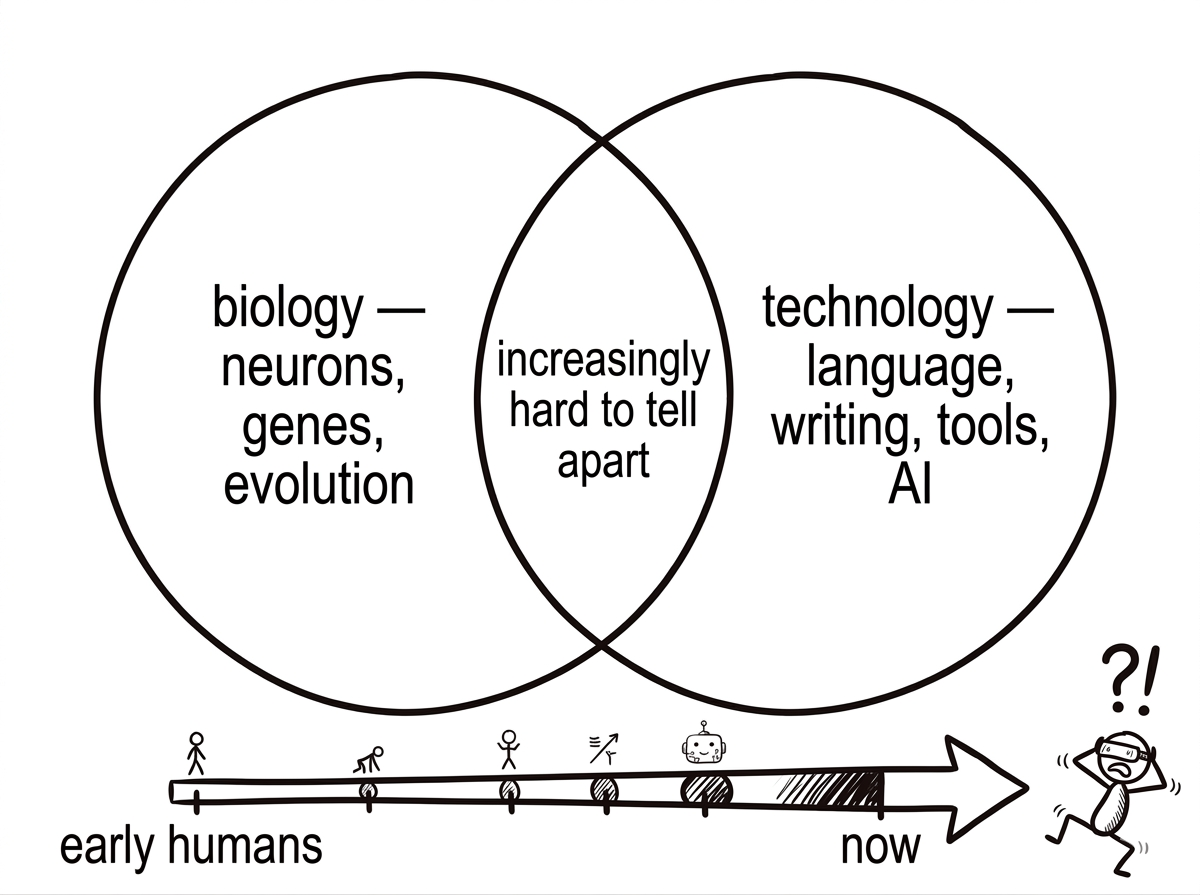

Biology and Technology Are Merging (And Have Been For a While)

There’s a framing I find useful here, though it sounds more dramatic than I intend it to: the boundary between biology and technology is dissolving.

Not in a cyborg science-fiction way — though that too is happening, slowly. In a more mundane and therefore more profound way. The things we build and the way we think have been co-evolving for so long that they’re no longer meaningfully separable.

Consider language. Is language a biological thing or a technological thing? It’s both, inseparably. The capacity for language is biological — hard-wired into the structure of the brain, present in infants, coded into the genome. But any specific language — Finnish, Mandarin, English — is cultural technology, transmitted across generations, evolving over time, shaping the thoughts that can be thought inside it. Your brain grew up inside a language. The language shaped what your brain became. You can’t cleanly separate the two.

Or consider reading. For most of human evolution, there was no such thing. The brain had no “reading module.” Then we invented writing, and something remarkable happened: in every person who learns to read, the brain rewires itself to accommodate it. Part of the fusiform gyrus — a region involved in object and face recognition — gets repurposed to recognize letter shapes. A few thousand years is far too short for evolution to have built this, so the change happens within each lifetime. Literacy literally changes the physical structure of the brain. The technology got into the biology. The separation between them is not as clean as we thought.

Now extend that logic forward. Each generation grows up inside a different technological environment, and that environment shapes cognitive development. Children who grow up with touchscreens navigate spatial interfaces differently from those who don’t. People who grew up with search engines remember things differently — they’re better at knowing where to find information and sometimes worse at retaining it. The technology is inside the people. The people are inside the technology. They’re one system that keeps getting harder to draw a boundary around.

And here’s where it connects to that 4-billion-year pattern we just looked at.

Because if you step back far enough, what you see is that this entanglement isn’t an accident or a modern phenomenon. It’s what the process does. Life absorbs its own inventions. Every time it develops a new information-processing layer, that layer gets folded back into the system and becomes inseparable from it. Chemical signaling started as something cells did — now it’s something cells are. Nervous systems started as optional accessories — now they’re the organizing principle of animal life. Language started as a communication tool — now it restructures cognition at the neural level.

Each time, the new layer starts out looking like something separate — an add-on, a tool, a technique. And each time, given enough time, it merges back into the organism until the boundary between “the thing” and “the thing it invented” becomes meaningless.

The Accountant, at this point, is not just confused about AI. The Accountant is starting to realize that the whole project of keeping clean columns — me here, tools there, biology here, technology there — might have been wrong from the start. The Accountant is having what you might call an existential audit.

What AI accelerates is the pace of this entanglement. Previous technologies changed the brain over generations — slowly, through development and culture. AI is changing how people think in real time, inside individual lifetimes, in ways that are immediate and visceral. The feedback loop between human cognition and its external extensions is getting tighter and faster with every year.

And here’s the part that I think deserves more attention than it gets: this isn’t something being done to us by technology. It’s something we keep choosing. Every generation adopts the tools that extend its cognitive reach, and in doing so, becomes a slightly different kind of cognitive system than the generation before. We’ve been doing this for hundreds of thousands of years. We’re just doing it much, much faster now.

The question isn’t whether biology and technology will merge. They already have. The question is what the merged system becomes next. And where — if anywhere — this 4-billion-year escalation is heading.

Okay But Where Does This Actually Go (The Part That Gets Weird)

I’ve been focused so far on what’s already happening. I want to spend a section on what the trajectory suggests, because I think most people haven’t followed the logic to where it naturally leads, and it leads somewhere genuinely strange.

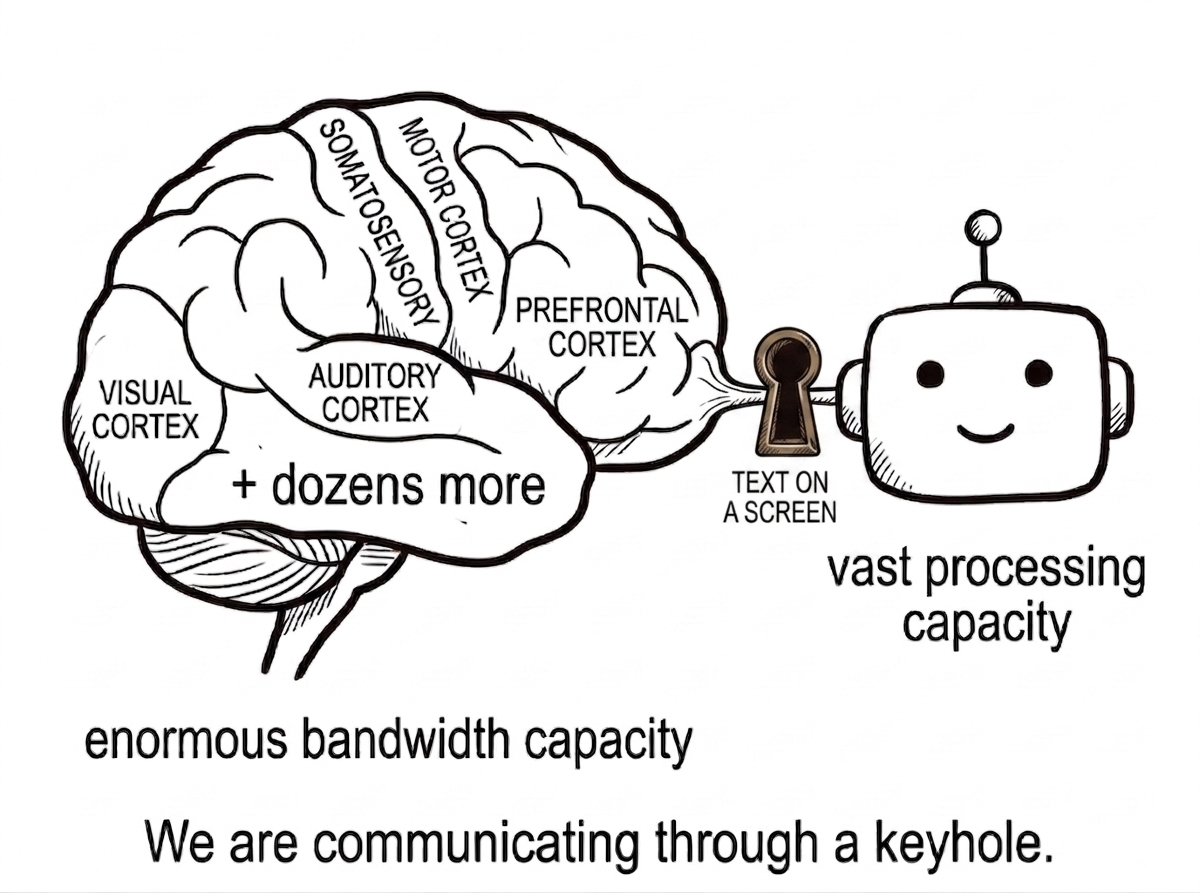

Let’s think about interfaces for a second.

Right now, every interaction between your brain and AI goes through your senses. You read text on a screen. You hear a voice from a speaker. You type with your fingers. You talk with your mouth. The information has to go:

AI system → physical medium (screen, speaker) → sensory organ (eyes, ears) → neural processing → conscious experience.

And going the other direction:

Intention → motor system → fingers/voice → input device → AI system.

This works. It’s what we’ve got. But think about how much of a bottleneck that is.

Your visual cortex can process staggeringly rich information — an entire visual scene, millions of data points, in a fraction of a second. But the pipeline feeding it from AI right now is: a tiny rectangle of text on a glass screen. Your auditory cortex can parse incredibly complex acoustic scenes. But from AI you get: a single voice through a speaker. Meanwhile, touch, proprioception, and the vestibular sense — a large part of your brain’s sensory machinery — aren’t even involved.

We’re communicating with AI through a keyhole.

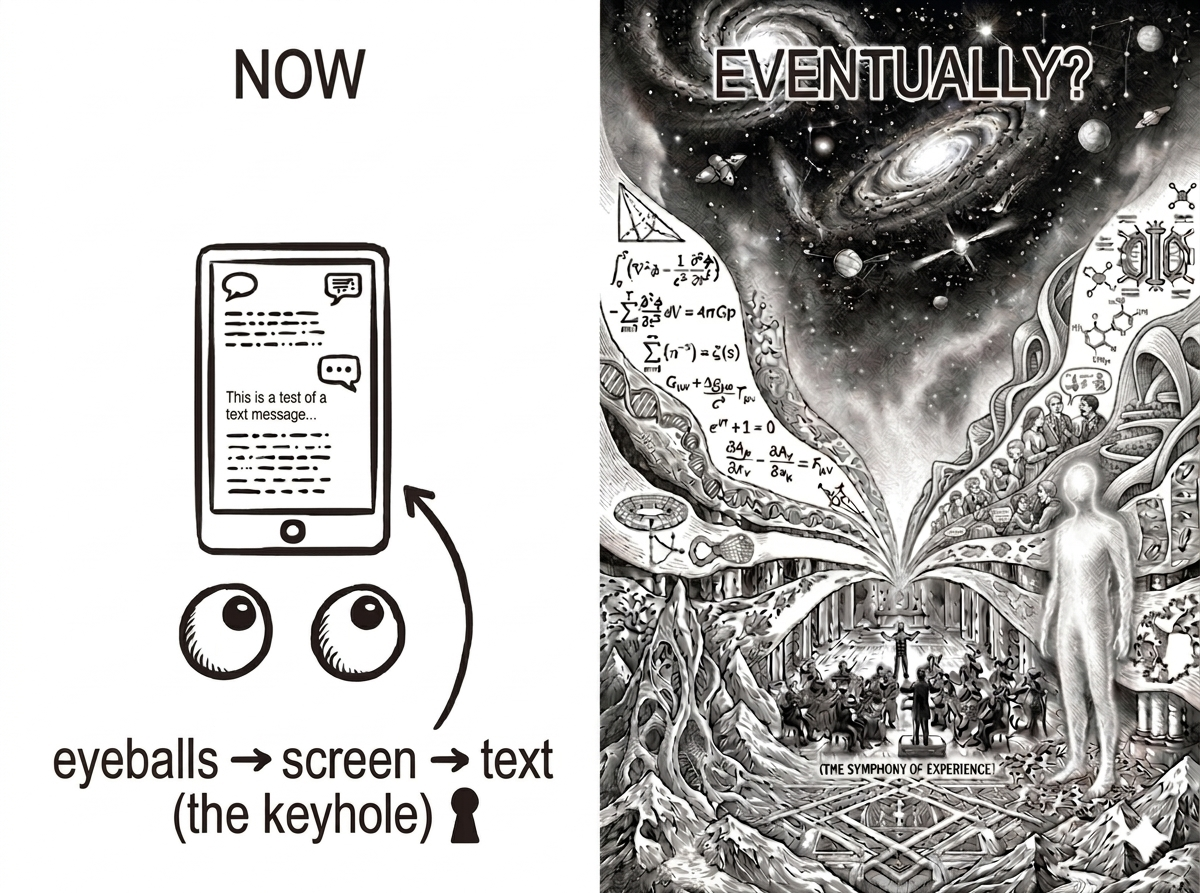

Now. Follow the trajectory.

Every previous generation of interface technology has worked in the same direction: making the pipe wider, bringing technology closer to the neurons. The command line gave way to the graphical interface. The keyboard was joined by the mouse, then the touchscreen, then voice. Virtual and augmented reality headsets gave us stereoscopic vision and spatial audio. Each step took a bigger share of sensory bandwidth and connected it to the digital system on the other side.

But all of these still go through the senses. Light hits retinas. Sound hits eardrums. The body’s sensory organs are still the gatekeepers.

What happens when the pipe bypasses the senses entirely?

This is not science fiction. Not anymore. Right now, in labs and clinics around the world, brain-computer interfaces are getting more precise every year. People with paralysis are controlling cursors and robotic arms with their thoughts. Cochlear implants are converting sound directly into electrical patterns the auditory nerve can interpret. Experimental retinal implants are doing something similar for vision. These are crude — low resolution, limited bandwidth, surgically invasive. But they exist. The principle has been demonstrated in both directions: you can read intentions out of neural circuitry without going through the muscles, and you can put information into it without going through the body’s usual sensory route.

Now imagine that technology 20 years more mature. 50 years. 100 years. Imagine it at the precision of individual neuron clusters. Imagine it non-invasive.

The Accountant just closed the ledger and walked out of the room.

The Keyhole and the Cathedral

Because here’s what that means, if you follow the logic:

Your visual cortex constructs your experience of seeing. Right now, it does this based on signals from your retinas. But the visual cortex doesn’t know or care where the signals come from. It processes patterns. If you could feed it patterns directly — bypass the eyes entirely, stimulate the neural networks that construct visual experience — you would see things that aren’t in front of you. Not imagine them. Not visualize them in the vague way you can close your eyes and picture a beach. Actually see them, with the full phenomenal richness of waking visual experience. Because the experience of seeing is constructed by the cortex, not by the eyes. The eyes are just one input source.

The same logic applies to every other sensory modality. Auditory cortex constructs hearing. Somatosensory cortex constructs touch. The vestibular system constructs your sense of balance and spatial orientation. In each case, the experience is constructed by neural networks, and the sensory organs are the current — but not the only possible — source of the raw signals those networks process.

If you can write to those networks directly, with sufficient precision, you can construct any experience. A simulation rendered with your very own neurotransmitters. Not an approximation. The real thing — because the experience was always a construction anyway.

I realize I’ve just described the Matrix. But the Matrix got something fundamentally wrong. In that movie, the simulated experience is a prison — a lie imposed by machines to keep humans docile. The framing is adversarial. Evil AI trapping helpless humans in a fake world.

That’s Model 1 thinking. “Human vs. AI.” And it misses the much more interesting and realistic version of the same basic technology.

Think about it through the Model 3 lens instead. Not a prison but an expansion. Not a replacement for reality but an extension of it. A collaboration between your brain’s extraordinary ability to construct conscious experience and a technology that gives it vastly more material to work with.

You could experience a lecture as a walk through a three-dimensional landscape of the concepts being discussed — abstract ideas rendered as spatial relationships your visual cortex can parse intuitively. You could experience a piece of music not just as sound but as a full-body somatic event — every instrument a different texture of physical sensation. You could experience a conversation with an AI not as text on a screen but as a presence — a voice in a shared space, with all the social and emotional cues that embodied interaction provides.

The bandwidth of the cognitive partnership goes from keyhole to cathedral.

Now. I want to be careful here. Because there’s a version of this that’s utopian nonsense, and I don’t want to sell that.

I don’t know if this is where we’re going. The neuroscience is hard. The engineering is harder. The ethical questions are the hardest of all — who controls the signals? Who decides what experiences get streamed to whose neurons? What happens to shared reality when individual experience becomes fully programmable? These are not small questions, and we don’t have good answers to any of them.

But here’s what I do think is worth sitting with: the trajectory points here. Not because anyone planned it, but because the pattern we’ve been watching for 4 billion years — new layer, tighter coupling, more integrated system — doesn’t have an obvious stopping point. The distance between “thinking” and “receiving input from the technology” keeps shrinking.

At some point, if the trend continues, that distance reaches zero. The technology doesn’t communicate with the brain through the senses. It communicates as the senses. It becomes another signal source in the neural orchestra that constructs your moment-to-moment conscious experience.

And at that point, The Accountant doesn’t just close the ledger. The Accountant throws it in the fire. Because the distinction between “internal” and “external” cognition has become truly, irreversibly meaningless. The technology isn’t outside you. It isn’t inside you. The question itself has dissolved.

The planet’s 4-billion-year project of producing ever more capable information-processing systems hasn’t stopped. It’s just entered a phase where the next layer won’t be biological at all — and the interface between the biological layers and the technological ones is approaching something like transparency.

I find this one of the most genuinely stunning things I’ve ever thought about. I also find it terrifying. Both feelings seem correct.

The Honest Part (Where I Tell You What I Don’t Know)

I’ve been building a case, but I want to be clear about where I run out of ground to stand on.

I don’t know where this goes. The trajectory seems to point toward tighter integration — AI that’s faster, more fluid, more deeply woven into how we think and work. But predictions about the pace and character of technological change are mostly just confident-sounding guessing. I’m doing some of that. So is everyone.

I don’t know if the extended mind framing is right. Clark and Chalmers’s paper has been debated for nearly thirty years. Plenty of smart people think it’s confused. I find it compelling, but I’m not a philosopher of mind, and I’m definitely leaving out objections.

I honestly don’t know what it means for identity. If your thinking increasingly happens in collaboration with external AI systems — if your plans, drafts, analyses, decisions are all products of a human-AI loop — what does that do to the experience of being a self? Does it matter? Does it feel different? I think it probably does feel different, and I think we don’t have good language or frameworks for those feelings yet. The Accountant is not the only one struggling. I am too.

I don’t know if the trajectory continues. I just described a path toward direct neural interfaces, experience streamed to cortex, the dissolution of the boundary between biological and technological cognition. It’s possible that path runs into hard limits — neuroscientific, engineering, political, ethical — that slow it down or stop it entirely. It’s possible we hit a plateau and the interface stays roughly where it is: screens and voices and keyboards. But it’s also possible it doesn’t. And I notice that throughout history, the people who bet against tighter coupling between humans and their cognitive tools have consistently been wrong.

I don’t know if this is good or bad and I’m suspicious of people who are very sure either way. Genuinely transformative shifts in how humans think and know tend to produce both wonderful things and terrible things, often in ways that weren’t predicted, on timelines that weren’t expected. Writing made us collectively smarter and also made propaganda possible at scale. The printing press accelerated the Reformation and also helped fuel the religious wars that followed, the Thirty Years’ War among them. The internet connected us and also did — gestures broadly at everything.

I’m not saying it all comes out in the wash. I’m saying that if you’re certain about how this turns out, you’re probably not taking the uncertainty seriously enough.

What I Think Is Actually Happening

Here’s where I land, at least today.

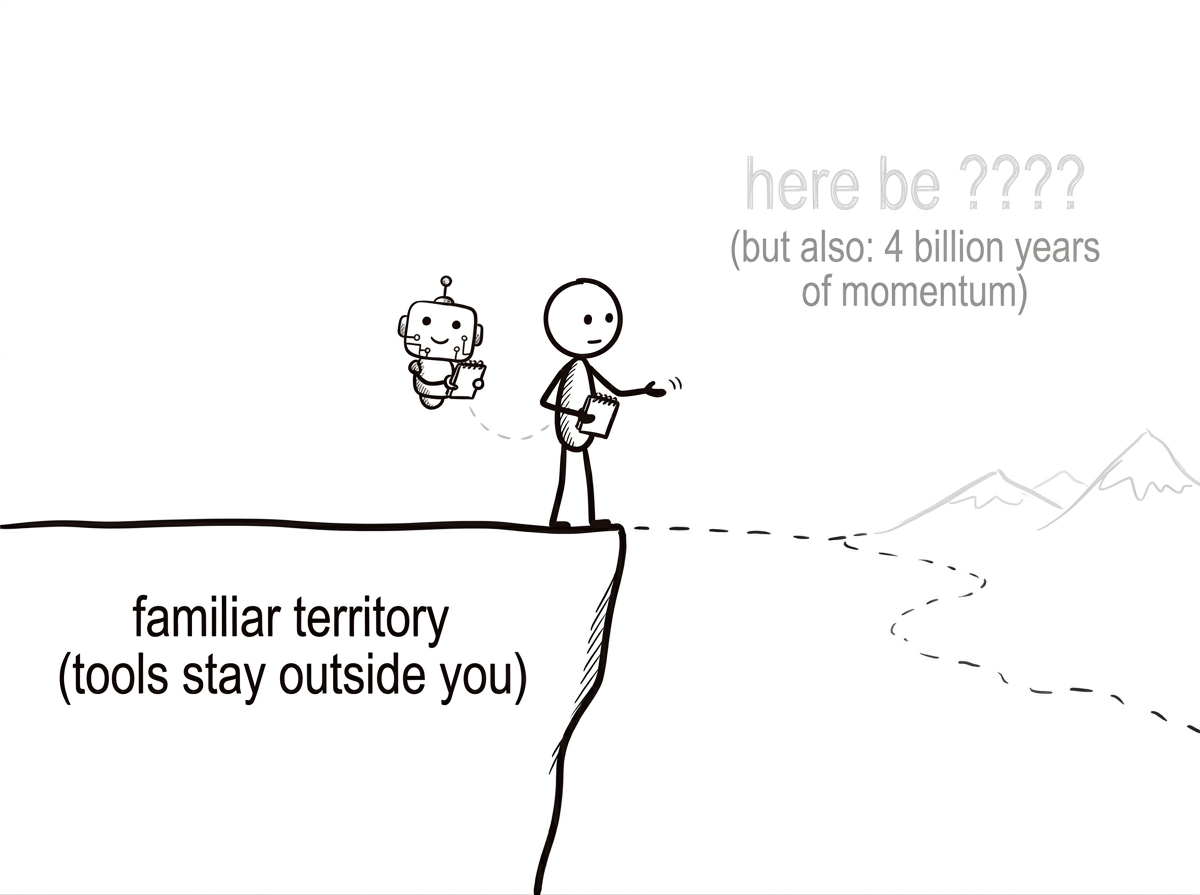

Something is ending. The age of tools as purely external, inert, dumb things that wait for humans to direct them — that age is closing. It was a long age. Almost all of human history. We got comfortable in it. The Accountant built a very tidy set of books around it.

Something is beginning. It doesn’t have a name. It involves human intelligence and machine intelligence operating in close enough coupling that the output can’t easily be attributed to either alone. It’s not what science fiction predicted. It’s not a war, and it’s not a transcendence. It’s more like a new kind of cognitive arrangement — messy, practical, evolving, unprecedented.

And the thing is: this new arrangement is already here. It’s not coming. It arrived, quietly, through apps and tools and workflows and AI agents that people started using to get things done, without anyone giving a press conference about the philosophical implications. The Singularity didn’t arrive with a bang, but with a software update we all agreed to in our sleep.

Most people are in Model 2 — telling themselves they’re just using a very smart tool, nothing fundamentally changed. I think Model 2 is increasingly a polite fiction we tell ourselves because Model 3 requires updating things that feel very fundamental. And updating fundamental things is uncomfortable.

But uncomfortable doesn’t mean wrong.

And it’s worth remembering: this isn’t new. Not really. It’s the latest episode in a story that’s been running for 4 billion years on this planet — a story about matter organizing itself into increasingly capable systems for processing information. About layers accreting on top of layers. About each layer integrating so deeply with the previous ones that you can’t separate them without destroying both.

We are the current episode. Not the last one.

The Accountant is sitting in the ruins of the old filing system, surrounded by ledger pages, staring at a wall. The columns don’t work anymore. “Me” and “not me.” “Inside” and “outside.” “Biology” and “technology.” The categories held for a long time. They don’t hold now.

The Accountant, if I’m being honest, is going to need a whole new system.

The hammer stayed outside you. You put it down at the end of the day. It didn’t dream about the nails.

What comes next doesn’t stay outside. It’s learning to think with you. It’s getting better at it fast. And one day — maybe not in our lifetimes, maybe sooner than we think — the distance between “thinking” and “thinking with” might shrink to nothing at all. The signal goes directly to the cortex. The experience is seamlessly woven into consciousness. The boundary between the thinker and the thing it thinks with becomes as meaningless as the boundary between your neurons and your gut bacteria.

The questions this raises — about mind, about self, about what thinking even is, about what this planet has been building toward for 4 billion years — are among the most interesting and important questions I can imagine.

We should probably start taking them seriously.

If this post made you think about something differently, or if you think I’ve got it completely wrong, I want to know.

Go tell someone.
